I began my research with the Four Eyes Lab, from the departments of Computer Science and Media Arts and Technology at UCSB.

The research focus is on the "four I's" of Imaging, Interaction, and Innovative Interfaces, and many projects are being developed in the fields of:

- Augmented Reality

- Computer Vision, Pattern Recognition & Imaging

- Graphics, Visualization & Interaction

- Display Technologies

So I had a look at the main directions in which the research is going, beginning with augmented reality (AR), defined as "a live, direct or indirect, view of a physical, real-world environment whose elements are augmented by computer-generated sensory input such as sound, video, graphics or GPS data."

AR is related to the concept of mediated reality, which refers to interactions with the real environment in which the view is modified by a computer.

AR contrasts with virtual reality, where the environment is entirely virtual and replaces the real world. AR itself usually runs in real time and in semantic context with environmental elements, and it makes it possible to overlay artificial information on the real world.

AR has many areas of application, such as archaeology, architecture, art, commerce, education, industrial design, medicine, military, workplace, sports and entertainment, tourism, and support tasks (such as assembly, maintenance, surgery, and translation).

Some interesting works are:

- Anywhere Augmentation: a new term for the concept of building an AR system that will work in arbitrary environments with no prior preparation. The main goal of this work is to lower the barrier to broad acceptance of augmented reality by expanding beyond research prototypes that only work in prepared, controlled environments.

- The City of Sights: the design and implementation of a physical and virtual model of an imaginary urban scene that can serve as a backdrop or “stage”, particularly for AR.

- Outdoor Modeling and Localization: a system for modeling an outdoor environment using an omnidirectional camera and then continuously estimating a camera phone's position with respect to the model.

http://ilab.cs.ucsb.edu/images/stories/ ... ly_low.mov

I continued my review with computer vision, which is "a field that includes methods for acquiring, processing, analyzing, and understanding images and, in general, high-dimensional data from the real world in order to produce numerical or symbolic information, e.g., in the forms of decisions".

Two interesting works in this field are:

-

Composition Context Photography a study on the process of framing photographs in the very common scenario of a handheld point-and-shoot camera in automatic mode. It works by silently recording the composition context for a short period of time while a photograph is being framed,with thw aim of characterize typical framing patterns, collected in a big database.

-

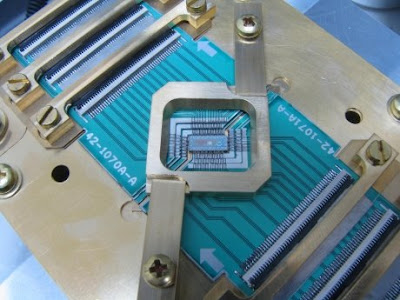

Mobile Computational Photography an emerging research area that aims to extend or enhance the capabilities of digital photography by adding computational elements to the imaging process.

In the area of visualization, two projects captured my attention:

- Interactive Visualization of Uncertain Data in a Mouse's Retinal Astrocyte Image: I didn't get exactly what it is about, but the image was really cool.

- WiGis (Web-based Interactive Graph Interfaces): a project centered on highly interactive graphs in a user's web browser.

As for display technologies, the most interesting thing at UCSB is probably the AlloSphere, which I described in the week 5 homework.

Other visualization-related works I have come across since I set foot on this campus are the projects of Marcos Novak and his Transvergency lab, and those of Rene Weber, who uses imaging and brain scanning to examine the effects of video games.

http://ilab.cs.ucsb.edu/index.php/compo ... &section=1

http://en.wikipedia.org/wiki/Augmented_reality

http://en.wikipedia.org/wiki/Computer_vision

http://en.wikipedia.org/wiki/Visualization

http://www.allosphere.ucsb.edu/

http://www.mat.ucsb.edu/~marcos/centrif ... covers_in/

http://www.dr-rene-weber.de/


And so, thanks to our brain's sense of depth perception, faces can be visualized very well in 3D, as the mind naturally expects curvatures and facial structures to be there.
