After the hands-on lab using the cameras in Processing, I wanted to explore how the Xbox Kinect camera works and what applications this type of camera makes possible.

The camera emits near-infrared light and measures how long that light takes to return after reflecting off objects [1]. The round-trip time is proportional to the distance to the object, so the camera can use it to work out the object’s depth. Repeating this measurement across the field of view can produce a highly accurate 3D map of the objects in it.
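To make the proportionality concrete, here is a minimal sketch of the time-of-flight arithmetic in Python. It assumes the light travels at the vacuum speed of light and that the sensor reports a clean round-trip time; the function names are my own for illustration and are not part of any Kinect or Processing API.

```python
# Speed of light in a vacuum, in metres per second (exact by definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Return the one-way distance (m) for a measured round-trip time (s).

    The light travels to the object and back, so the distance to the
    object is half of the total path length.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


def round_trip_from_distance(distance_m: float) -> float:
    """Return the round-trip time (s) for an object distance_m metres away."""
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # An object 2 m from the camera: the light is back in roughly 13 ns.
    t = round_trip_from_distance(2.0)
    print(f"round trip: {t * 1e9:.2f} ns")
    print(f"recovered distance: {distance_from_round_trip(t):.3f} m")
```

The numbers show why this is hard to build: an object 2 m away returns the light in about 13 nanoseconds, so the sensor has to resolve extremely small differences in time to tell nearby depths apart.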

This is similar to how Lidar works, which is the remote sensing method that self-driving cars use. Lidar (Light Detection and Ranging) uses light pulses to measure the distance to objects and generate precise 3D maps of the surrounding area [2].
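As a rough illustration of how individual distance measurements can become a 3D map, the sketch below turns each return (a range plus the direction the pulse was sent in) into an x, y, z point. The coordinate convention (x forward, y left, z up, angles in degrees) and the function names are assumptions I chose for the example, not how any particular Lidar unit reports its data.

```python
import math


def polar_to_xyz(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert one return (range, azimuth, elevation) to an (x, y, z) point.

    Assumed convention: x points forward, y to the left, z up.
    Azimuth rotates around the z axis; elevation tilts up from the x-y plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # length of the projection onto the ground plane
    x = horizontal * math.cos(az)
    y = horizontal * math.sin(az)
    z = range_m * math.sin(el)
    return (x, y, z)


def build_point_cloud(returns):
    """Convert an iterable of (range_m, azimuth_deg, elevation_deg) tuples to points."""
    return [polar_to_xyz(r, az, el) for (r, az, el) in returns]


if __name__ == "__main__":
    # Three made-up returns: straight ahead, directly left, and ahead but tilted up.
    sample_returns = [(10.0, 0.0, 0.0), (5.0, 90.0, 0.0), (8.0, 0.0, 30.0)]
    for point in build_point_cloud(sample_returns):
        print(tuple(round(c, 3) for c in point))
```

A real scanner collects a very large number of these returns per sweep, and the resulting collection of points is what gets rendered as the 3D map.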

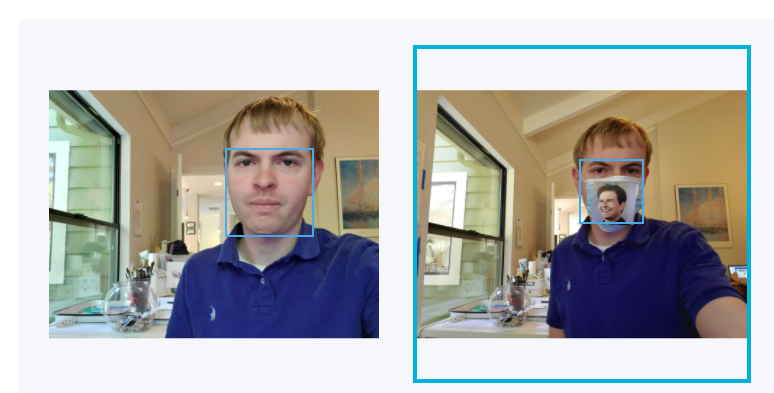

As can be seen from both of these images, IR camera sensing and Lidar both give an accurate 3D model of the surrounding environment. But this is only one part of the process, and a small one at that. Generating these environments is great, but what does the computer actually do with them once they are created? For example, in the last image we, as humans, can see that two people are walking across a crosswalk, and we can infer (even though we cannot see it) that the light is probably red, so the car should stay stopped while they cross.

But the car doesn’t know that. All it sees are some objects moving through space. This leads to an interesting idea: even though Lidar lets the car “see”, the car can’t actually “understand” what it sees without human intervention. This is where image processing techniques and machine learning come into play for self-driving cars. As that is not the topic for this week, I will hold off on exploring these ideas until a future week. With this topic coming up, though, I want to pose a few questions that it raises.

Since self-driving cars rely on human input, it is to be expected that some human bias gets embedded in the image processing and machine learning systems that they use. In my past posts, I have brought up the idea of the “true image”: an image that is a perfect representation of something happening at a given moment. With Lidar and IR cameras, it would make sense to call the images they produce true images, as they are a perfect representation of the surrounding environment. But since a computer cannot “see” on its own, we have to tell it what to look for and what things are, and it then makes decisions based on the biases we embed in it. This led me to question what exactly it means to call something a “true image”. Since we are all biased in our own ways, is there ever really a true image?

Works Cited

1. https://www.wired.com/2010/11/tonights- ... bject%20is.
2. https://oceanservice.noaa.gov/facts/lidar.html