Wk8 - Vision Lab on Campus

Spend time going through the various sources, such as department descriptions, labs, etc., that you can find on the internet, for example: http://www.ucsb.edu/, http://www.cnsi.ucsb.edu/, http://www.kitp.ucsb.edu/, and the many other programs we have at UCSB.

Identify a research area of possible interest to you. Provide condensed documentation the way Hannah has done at:

http://www.mat.ucsb.edu/forum/viewtopic ... =202#p1213 using descriptions, images, URL links, other such research, etc.

George Legrady

legrady@mat.ucsb.edu

-

hcboydstun

- Posts: 9

- Joined: Mon Oct 01, 2012 3:14 pm

Re: Wk8 - Vision Lab on Campus

This week I began research on two very different labs under UCSB’s umbrella of research centers: the Center for Spintronics and Quantum Computation and the Center for Polymers and Organic Solids.

To start, UCSB’s Center for Spintronics and Quantum Computation covers a broad range of research from physics, materials science, electrical engineering and computer science to address new and rising problems in the fields of quantum computation and spin-based electronics. But first, what are quantum computation and spin-based electronics?

Quantum computation is simply what a quantum computer does. Quantum computers can simulate things that cannot practically be simulated on a conventional computer. For example, special effects are a type of computer simulation, run on conventional computers, that alters real life in a way that is less expensive for the producer (imagine if we had actually blown up the White House for the movie Independence Day). On a smaller scale, everyday activities, from eating to driving a car, can also be simulated on a conventional computer. We call these types of simulation direct because they solve a linear problem.

The problems that quantum computers target are intractable, or hard to render into a simulation. It is this distinct difference in problem solving that makes quantum computers advantageous over conventional computers. Quantum computers work by exploiting what is called “quantum parallelism,” or the idea that we can simultaneously explore many possible solutions to a problem. This does not mean that we necessarily arrive at a right or wrong answer; rather, the idea is to collect a plethora of data.

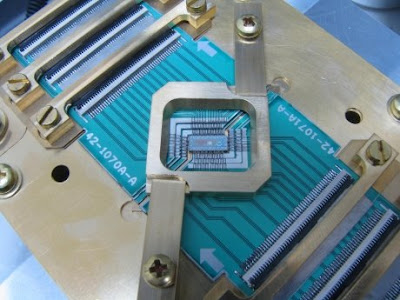

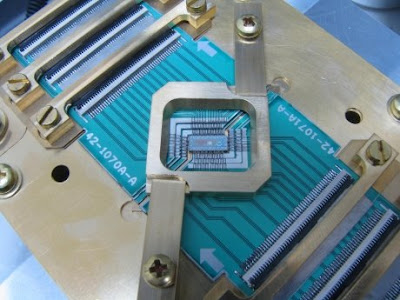

Quantum computers are built from ‘quantum bits’, the quantum analogue of the bits that make up conventional computers. These bits are manipulated by shining a laser on them, and each such manipulation is called a quantum gate. A sequence of quantum gates, done in a particular order, can be read like a program for a conventional computer. For certain problems, such a program can be faster and more efficient at processing information. [Below is a quantum computer, and yes it is really that small]
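
To make the idea of "a sequence of quantum gates read like a program" a bit more concrete, here is a minimal sketch in Python. It is only an illustration under textbook assumptions, not how the UCSB center's hardware is programmed: a single quantum bit is modeled as a pair of complex amplitudes, and each gate as a 2x2 matrix. The names `apply_gate`, `run_program`, `HADAMARD` and `NOT_GATE` are my own.

```python
import math

def apply_gate(gate, state):
    """Multiply a 2x2 gate matrix by a 2-element state vector."""
    return [
        gate[0][0] * state[0] + gate[0][1] * state[1],
        gate[1][0] * state[0] + gate[1][1] * state[1],
    ]

def run_program(gates, state):
    """Apply a list of gates in order, like the steps of a program."""
    for gate in gates:
        state = apply_gate(gate, state)
    return state

def probabilities(state):
    """Chance of measuring 0 or 1: the squared magnitude of each amplitude."""
    return [abs(amplitude) ** 2 for amplitude in state]

# The quantum bit starts out as a definite 0.
ZERO = [1 + 0j, 0 + 0j]

# Two standard single-qubit gates.
s = 1 / math.sqrt(2)
HADAMARD = [[s, s], [s, -s]]   # puts a definite 0 into an equal mix of 0 and 1
NOT_GATE = [[0, 1], [1, 0]]    # swaps 0 and 1, like a classical NOT

superposed = run_program([HADAMARD], ZERO)
flipped = run_program([NOT_GATE], ZERO)
back_to_zero = run_program([HADAMARD, HADAMARD], ZERO)

print(probabilities(superposed))    # roughly half 0, half 1
print(probabilities(flipped))       # always 1
print(probabilities(back_to_zero))  # two Hadamards cancel out
```

The order of the gates matters, which is why the sequence behaves like a program rather than a bag of operations.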

Furthermore, spintronics is a new field closely connected to quantum computation. The State University of New York at Buffalo offers a clear explanation of this connection:

“Conventional electronics relies on the electron’s charge for logic operations in computer microprocessors. In contrast, robust information storage in computer hard drives and high-capacity iPods can use another of electrons properties: its spin, rather than its charge…the electron spin is more elusive and is responsible for intriguing magnetic behavior in many materials.”

As further discussed, these magnets are able to retain their magnetic properties even when the device is off, and thus are superior to the various memory technologies we have now. In this sense, spintronics could help us create devices that run on a fraction of the power.

On the other hand, when I explored the Center for Polymers and Organic Solids, I became specifically interested in their studies of organic semiconducting and light-harvesting materials. Organic semiconductors are, literally, organic materials with semiconducting properties. There are many advantages to producing devices with such technology. Generally, these devices are more affordable, because most organic materials are less expensive to produce than ‘highly crystalline’ inorganic semiconductors. Likewise, organic materials do not need a high level of energy to process. In other words, while most devices require an ‘annealing step’, in which the material is heated to a high temperature, this is not the case with organic materials. Thus, organic semiconductors can also be used in a greater variety of devices, including on plastics that could not withstand annealing temperatures.

Light-harvesting materials work in a similar way. They use minimal amounts of material to produce large amounts of energy. In some specific cases, researchers are using solar technology to create windows made of transparent solar cells. The goal of this research is to develop new, energy-efficient materials for commercial use.

http://www.csqc.ucsb.edu/index.html

http://michaelnielsen.org/blog/quantum- ... -everyone/

http://en.wikipedia.org/wiki/Spintronics

http://www.scottaaronson.com/democritus/lec10.html

https://docs.google.com/viewer?a=v&q=ca ... PtvmGYLMZA

http://physics.usask.ca/~chang/homepage ... r_cell.gif

http://www.gizmag.com/light-harvesting- ... ilm/16819/

http://en.wikipedia.org/wiki/Organic_semiconductor

http://www.cpos.ucsb.edu/research/materials/

http://www.nature.com/nnano/focus/organics/index.html

http://physics.usask.ca/~chang/homepage ... ganic.html

Last edited by hcboydstun on Wed Nov 14, 2012 9:33 pm, edited 1 time in total.

Re: Wk8 - Vision Lab on Campus

My final project will utilize very similar technology to Marie Sester in her piece EXPOSURE:

http://www.sester.net/exposure/

Sester is a French-American artist working primarily with digital technologies to create works that take on many forms but ultimately study ideological structures of how culture, politics and technology affect our spatial awareness in the world.

Her project consists of X-rayed vehicles composited with LiDAR scans of architecture. These different scanning techniques, applied together to privately owned spaces and vehicles, exhibit how technology can be used for surveillance in some of the most personal and intrusive ways in society today. As Sester remarks in the description of her project, "... the images are beautiful as forms, yet violent because they deconstruct the pervasive nature of x-ray technology when used as a form of control."

The technology that she uses for the 3D scanning of her architectures is called LiDAR. LiDAR is an acronym for Light Detection And Ranging. It is an optical sensing technology that measures the distance to a target by illuminating the target with a laser. Typically, LiDAR is used in fields such as geomatics, archaeology, geography, geology, geomorphology, seismology, forestry, remote sensing, atmospheric physics and contour mapping.
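
As a rough sketch of how a laser can measure distance, the snippet below assumes a pulsed, time-of-flight design: the device times how long a pulse takes to reach the target and come back, then halves the round trip. That design is my assumption; neither the post nor Sester's project page says which ranging method her scanner uses. The function name `distance_from_round_trip` is made up for the example.

```python
# Speed of light in a vacuum, in meters per second (exact by definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

def distance_from_round_trip(round_trip_seconds):
    """Distance to the target, given how long the laser pulse took to return.

    The pulse travels to the target and back, so the one-way distance
    is half of the total path length.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0

# A pulse that returns after one microsecond hit something about 150 m away.
print(distance_from_round_trip(1e-6))
```

The timescales are tiny: a building 15 meters away returns the pulse in about a tenth of a microsecond, which is why the receiver electronics matter so much.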

This lidar (laser range finder) may be used to scan buildings, rock formations, etc., to produce a 3D model.

The top image shows the scanning mechanism; the middle image shows the laser's path through a basic scene; the bottom image shows the sensor's output, after conversion from polar to Cartesian coordinates.
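
The conversion from polar to Cartesian coordinates mentioned in the caption can be sketched as follows. This assumes a flat, two-dimensional scan where each reading is a pair of (angle in degrees, range in meters) measured from the sensor; the function names and the sample readings are made up for illustration.

```python
import math

def polar_to_cartesian(angle_degrees, range_m):
    """Convert one (angle, range) reading into an (x, y) point in meters."""
    theta = math.radians(angle_degrees)
    return (range_m * math.cos(theta), range_m * math.sin(theta))

def scan_to_points(scan):
    """Convert a whole sweep of (angle, range) readings into (x, y) points."""
    return [polar_to_cartesian(angle, rng) for angle, rng in scan]

# Hypothetical sweep: three readings taken at 0, 45 and 90 degrees.
sweep = [(0.0, 2.0), (45.0, 2.8), (90.0, 3.0)]
for x, y in scan_to_points(sweep):
    print(round(x, 2), round(y, 2))
```

A full 3D scan adds a second angle (tilt), but the idea is the same: every laser return becomes one point, and the cloud of points is the model.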

There are a handful of major components that make up a LiDAR system. First, the laser typically emits light at wavelengths ranging from about 10 micrometers down to about 250 nanometers, which is reflected back via backscattering (http://en.wikipedia.org/wiki/Backscatter). Next, the scanner and optics determine how quickly the scene or object is scanned. The receiver electronics, or image sensor, are either a solid-state photodetector or a photomultiplier. Lastly, LiDAR often uses positioning systems like GPS to determine the exact position and orientation of the device.

Sources:

http://www.sester.net/exposure/

http://en.wikipedia.org/wiki/LIDAR

Last edited by rdouglas on Wed Nov 28, 2012 1:54 pm, edited 1 time in total.

-

dslachtman

- Posts: 5

- Joined: Mon Oct 01, 2012 3:23 pm

Re: Wk8 - Vision Lab on Campus

Aimee Jenkins and I are working on this project together. Here is our lab of interest:

Many modern physical structures are affected by natural elements like gravity, temperature, aging and pressure. Professors at University of Southern California and University of California, Santa Barbara have been working on different methods to correct the damage to these structures.

UCSB Professor Philip Lubin has developed “a method that actively and corrects imperfection in a structure that is designed to be precisely aligned.” The technology is called AMPS, which stands for Adaptive Morphable Precision Structures system. It optimizes the performance of structures such as buildings, telescopes and satellites by correcting the imperfections caused by the elements mentioned above. With AMPS, engineers do not have to put extra design effort into accounting for the inevitable decay of their products, because they can count on a system that will fix the imperfections that develop over time. The system can work from as far away as 100 meters, and efforts are under way to extend that distance to a kilometer and maybe even further.

We are relating this technology to art through the idea of symmetry in science, art and technology. Symmetry and asymmetry guide our understanding of science as well as photography. We hope to connect the search for lack of symmetry, AMPS technology and photography into one cohesive project: photographing and recording asymmetry in machines in order to aid the efforts of the AMPS system.

We envision photography being used in the future to see what errors exist in machines and moveable structures, with the AMPS technology used to correct those errors. We see a future in which an aircraft flies over a metropolis, spots faulty structures, and uses the AMPS system to correct the errors before irreparable damage is done. In this way, we combine art, science, technology and city planning into one effort for structural symmetry.
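
One simple way to "record asymmetry" from a photograph could look like the sketch below. It is purely hypothetical and has nothing to do with how AMPS itself measures a structure: it treats a grayscale photo as a grid of brightness values (0 to 255) and compares it with its own left-right mirror image. The name `asymmetry_score` and the tiny test images are invented for the example.

```python
def asymmetry_score(image):
    """Mean absolute difference between an image and its left-right mirror.

    `image` is a list of rows, each row a list of grayscale values (0-255).
    A perfectly mirror-symmetric image scores 0.0; larger scores mean
    the left and right halves differ more.
    """
    total = 0
    count = 0
    for row in image:
        mirrored = row[::-1]
        for original_pixel, mirrored_pixel in zip(row, mirrored):
            total += abs(original_pixel - mirrored_pixel)
            count += 1
    if count == 0:
        raise ValueError("image has no pixels")
    return total / count

# A symmetric pattern and one with a bright spot on the right side only.
symmetric = [[10, 200, 10],
             [50, 0, 50]]
lopsided = [[0, 0, 255]]

print(asymmetry_score(symmetric))  # 0.0
print(asymmetry_score(lopsided))   # 170.0
```

A real photograph would first need the structure centered and straightened, since a tilted camera would register as asymmetry too.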

http://www.me.ucsb.edu/projects/sites/m ... team12.pdf (example of an adaptive structure)

http://www.deepspace.ucsb.edu/people/fa ... esearchers

http://techtransfer.universityofcalifor ... 22163.html

Re: Wk8 - Vision Lab on Campus

Name: EXPOSURE, 3d LIDAR DATA

Place: UCSB

EXPOSURE is an installation that uses large-scale surveillance X-ray imagery of the kind used to inspect things like large semi trucks for smuggling. This large-scale surveillance system can even X-ray larger items such as buildings.

http://www.sester.net/exposure/

Last edited by slpark on Sun Nov 18, 2012 3:02 pm, edited 1 time in total.

Re: Wk8 - Vision Lab on Campus

I began my research with the Four Eyes Lab, from the departments of Computer Science and Media Arts and Technology at UCSB.

The research focus is on the "four I's" of Imaging, Interaction, and Innovative Interfaces, and many projects are being developed in the fields of:

- Augmented Reality

- Computer Vision, Pattern Recognition & Imaging

- Graphics, Visualization & Interaction

- Display Technologies

So, I looked at the main directions in which the research is going, beginning with augmented reality (AR), defined as "a live, direct or indirect, view of a physical, real-world environment whose elements are augmented by computer-generated sensory input such as sound, video, graphics or GPS data."

AR is related to the concept of mediated reality, which refers to interaction with the real environment in which the view is modified by a computer.

AR contrasts with virtual reality, where the environment is entirely virtual and replaces the real world. AR usually works in real time and in semantic context with elements of the environment, and it makes it possible to overlay artificial information on the real world.

AR has many areas of application, such as: archaeology, architecture, art, commerce, education, industrial design, medical, military, workplace, sports & entertainment, tourism, and support (as in assembly, maintenance, surgery, translation, etc.).

Some interesting works are:

- Anywhere Augmentation: a new term for the concept of building an AR system that will work in arbitrary environments with no prior preparation. The main goal of this work is to lower the barrier to broad acceptance of augmented reality by expanding beyond research prototypes that only work in prepared, controlled environments.

- The City of Sights: a design and implementation of a physical and virtual model of an imaginary urban scene that can serve as a backdrop or “stage”, particularly for AR.

- Outdoor Modeling and Localization: a system for modeling an outdoor environment using an omnidirectional camera and then continuously estimating a camera phone's position with respect to the model.

http://ilab.cs.ucsb.edu/images/stories/ ... ly_low.mov

I continued my review with computer vision, which is "a field that includes methods for acquiring, processing, analyzing, and understanding images and, in general, high-dimensional data from the real world in order to produce numerical or symbolic information, e.g., in the forms of decisions".

Two interesting works in this field are:

- Composition Context Photography: a study of the process of framing photographs in the very common scenario of a handheld point-and-shoot camera in automatic mode. It works by silently recording the composition context for a short period of time while a photograph is being framed, with the aim of characterizing typical framing patterns, which are collected in a large database.

- Mobile Computational Photography: an emerging research area that aims to extend or enhance the capabilities of digital photography by adding computational elements to the imaging process.

In the area of Visualization my attention was captured by 2 projects:

- Interactive Visualization of Uncertain Data in a Mouse's Retinal Astrocyte Image: I didn't get exactly what it is about, but the image was really cool.

- WiGis: Web-based Interactive Graph Interfaces: centered on highly interactive graphs in a user's web browser.

As for display technologies, the most interesting thing at UCSB is probably the AlloSphere, which I described in the wk5 homework.

Other visualization-related work I have come across since I set foot on this campus includes the projects of Marcos Novak and his Transvergency lab, and those of Rene Weber, who uses imaging and brain scanning to examine the effects of video games.

http://ilab.cs.ucsb.edu/index.php/compo ... &section=1

http://en.wikipedia.org/wiki/Augmented_reality

http://en.wikipedia.org/wiki/Computer_vision

http://en.wikipedia.org/wiki/Visualization

http://www.allosphere.ucsb.edu/

http://www.mat.ucsb.edu/~marcos/centrif ... covers_in/

http://www.dr-rene-weber.de/

Last edited by giovanni on Tue Nov 20, 2012 2:21 am, edited 4 times in total.

Bilayer Disparity Remapping for 3D Video Communications

Working with Ashley Fong

In the Electrical and Computer Engineering department, Stephen Mangiat and Jerry Gibson have been working on disparity remapping for handheld 3D video communications. Their goal is to bring 3D perception to mobile video conferencing.

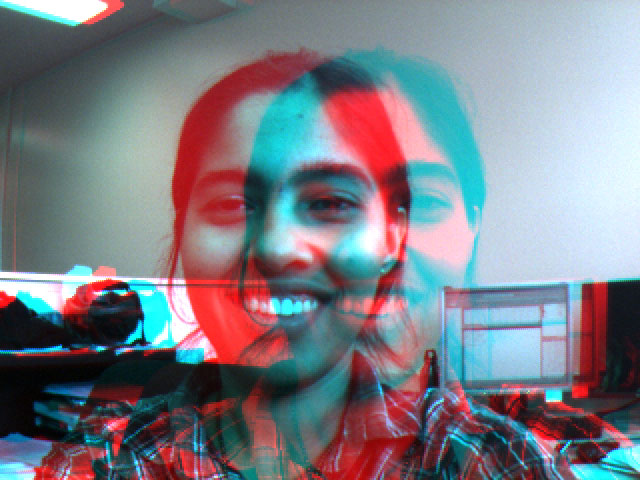

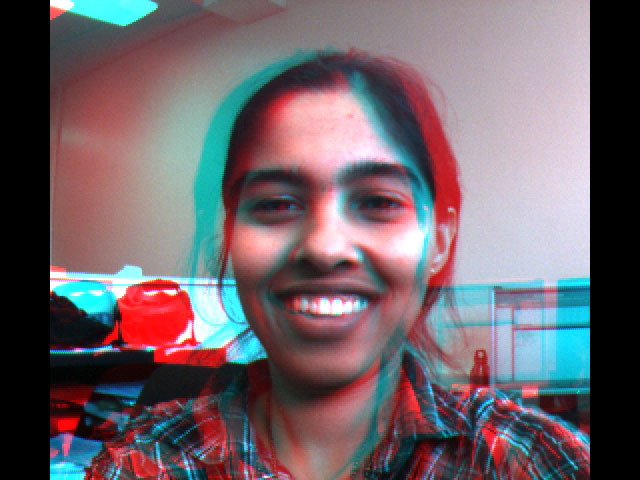

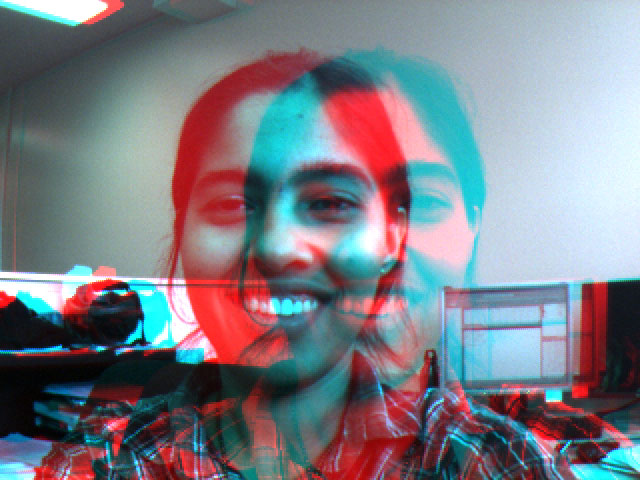

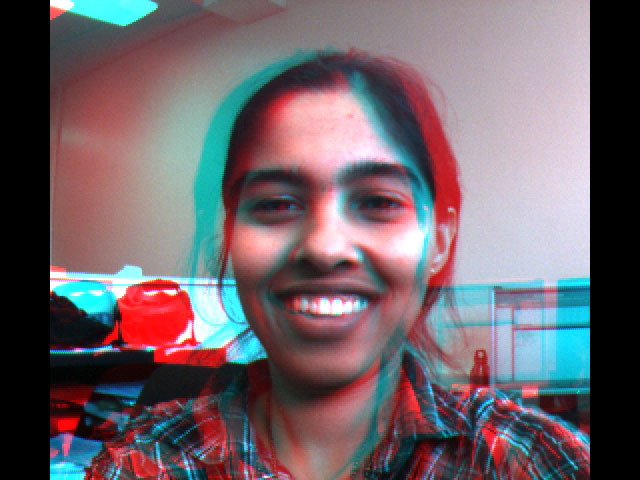

The brain can reconstruct 3D volumes from 2D imagery using various monoscopic (single-image) depth cues. Stereoscopy, or 3D imaging, eases this process by presenting a fundamental optical depth cue. Depth perception works in part because the brain fills in what is not literally there, for example by perceiving lines that are not actually drawn. Because of this, faces work especially well in 3D: the mind naturally expects the curvature and structure of a face to be there.

Most 3D displays require glasses, which handheld autostereoscopic displays should make obsolete. The difficulty is keeping the 3D effect within the comfort zone of the eyes. The eyes normally focus at the depth of the object they are looking at. Stereoscopic 3D imaging breaks this link, because the viewer has to focus at the distance of the display no matter how near or far the object appears. This disconnect causes discomfort and fatigue.

Avoiding discomfort would mean reducing the stereo baseline (the distance between the two viewpoints from which the two images, blue and red, are captured), which reduces the 3D effect: when the stereo baseline is reduced, the viewer sees more of a 2D “cardboard cutout.”
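
To see why a smaller baseline flattens the picture, here is a small worked example. It uses the textbook model of two parallel pinhole cameras, where disparity = focal length × baseline / depth. That model is my assumption and is not taken from Mangiat and Gibson's page; the focal length, baselines and depth below are made-up numbers.

```python
def disparity_pixels(focal_length_px, baseline_m, depth_m):
    """Horizontal offset, in pixels, of one object between the two images.

    Assumes two parallel pinhole cameras separated by `baseline_m`,
    looking at an object `depth_m` away.
    """
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return focal_length_px * baseline_m / depth_m

# Hypothetical handheld setup: face half a meter from the cameras.
wide = disparity_pixels(focal_length_px=800, baseline_m=0.06, depth_m=0.5)
narrow = disparity_pixels(focal_length_px=800, baseline_m=0.03, depth_m=0.5)

print(wide)    # about 96 pixels
print(narrow)  # about 48 pixels: half the baseline, half the disparity
```

Since every object's disparity shrinks by the same factor, the differences in disparity between near and far objects shrink too, and those differences are what the brain reads as depth.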

Video quality also depends on the user's camera or webcam, and the 3D effect depends on how the stereo information is processed. When an object that should appear in front of the display is cut off by the edge of the screen (the “window”), the brain cannot fill in the missing edges and gets confused.

With the two cameras pointing straight ahead, objects at a far depth appear at the same location in each image, so everything nearer appears in front of the display unless the images are shifted in post-processing.

Current 3D handheld devices shift automatically by minimizing the difference between the two images (blue and red), which converges them on the largest on-screen object. This method is prone to jitter. Mangiat and Gibson instead want to remap binocular disparity, the difference in the image location of an object as seen by the left and right eyes. The object nearest to the camera should always be placed at the screen plane; it is found with a dense disparity map, which feeds back the largest disparity. “Humans can tolerate changes in convergence, as long the speed of change is limited.”

The image below shows the shifted output and adjustments of disparities. The images converge onto the nose and the rest recedes behind the display, which then provides the most comfortable viewing experience on a handheld device.

This approach lets an algorithm eliminate the uncomfortable disparities that would otherwise break the 3D view, by shifting the images.
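
The shifting idea can be sketched roughly like this. It is my own simplified reading, not Mangiat and Gibson's actual algorithm: for each video frame we take the largest disparity (the nearest object) as the target shift, but only let the shift change by a limited amount per frame, following the point that the speed of convergence changes should be limited. The function name `remap_shifts`, the `max_step` parameter and the sample numbers are all hypothetical.

```python
def remap_shifts(max_disparities, max_step):
    """Return the image shift (in pixels) to apply on each frame.

    `max_disparities` holds, per frame, the largest disparity found in
    that frame's disparity map (i.e. the nearest object). Shifting the
    images by that amount would put the nearest object on the screen
    plane. The shift may change by at most `max_step` pixels per frame.
    """
    shifts = []
    current = None
    for target in max_disparities:
        if current is None:
            # First frame: jump straight to the target shift.
            current = float(target)
        else:
            # Later frames: move toward the target, but not too fast.
            step = target - current
            step = max(-max_step, min(max_step, step))
            current += step
        shifts.append(current)
    return shifts

# Hypothetical sequence: the user suddenly leans in, then leans back.
print(remap_shifts([10, 30, 30, 12], max_step=5))
# [10.0, 15.0, 20.0, 15.0]
```

The gap between the target and the applied shift is what the viewer briefly sees as an object popping out in front of the display before the view settles.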

http://vivonets.ece.ucsb.edu/handheld3d.html

http://vivonets.ece.ucsb.edu/mangiat_espa.pdf

Last edited by rosadiaz on Mon Nov 26, 2012 9:04 pm, edited 2 times in total.

Re: Wk8 - Vision Lab on Campus

Research for Final Project Art130:

The research I am doing for the final project is centered on brain imaging and reconstruction.

UCSB Brain Imaging Center, University of California, Santa Barbara

http://www.psych.ucsb.edu/news/bic_01-2004.php

At UCSB there is a research center for studying “mind science”, or more fully explained as “the study of psychological, biological, and computational processes that govern mental function.” This research facility uses MRI to conduct research on different functions of the brain. Some of the studies currently taking place focus on the neural basis for memory, spatial cognition, navigation, fluent speech and stuttering, learning, and motivated behaviors, as well as individual differences in brain function and altered brain function as occurs with autism and other learning disabilities. There is also research being done on virtual realities and psychological immersion, image analysis for brain imaging, and brain-computer interfaces.

The human brain is a complex and fascinating structure, and research on it is being done all over the world. The link between computers and human brains keeps growing stronger, and research is being done on all fronts to make the two even less distinguishable. Today's brain imaging makes it possible to study the mysteries of the brain more closely and to understand more fully how it works.

Research at UC Berkeley has led to the reconstruction of the projections inside your brain, closing the gap between the “movie” of life that you watch through your own eyes and the movies you see at the theater. This YouTube clip and article explain how this is done and what the implications of this research are. This research could lead to many new developments in areas including psychotherapy, dream analysis, and communication with those who cannot communicate. With MRI technology and innovation, there are many new opportunities to visualize what we have never been able to see before: what is going on inside the brain. As we are highly visual creatures, this is certainly important to the future.

http://newscenter.berkeley.edu/2011/09/22/brain-movies/

http://www.youtube.com/watch?v=6FsH7RK1 ... e=youtu.be

-

amandajackson

- Posts: 9

- Joined: Mon Oct 01, 2012 3:17 pm

Re: Wk8 - Vision Lab on Campus

http://www.iwrms.uni-jena.de/~c5hema/pu ... ldetal.pdf

Research in the Geography department includes the monitoring and mapping of remotely sensed data. By using infrared cameras, researchers are able to differentiate between buildings, vegetation, and water in both suburban and urban areas. Because vegetation gives off a bright reflectance in false-color infrared images, researchers are able to see the differences between vegetation types. There are two general categories of trees: conifers and deciduous.

"Deciduous trees take a big nutrient boost to produce hundreds or thousands of new leafy suncatchers. In fact, they collect an enormous amount of sunlight compared to their coniferous cousins, meaning that they can photosynthesize at high rates through the warm season. Then, when autumn rolls around, the leaves can be dropped essentially on top of the tree’s roots, where they’ll recharge the soil with nutrients after composting. During the winter, most of the energy in the tree moves into its roots and it has a vastly reduced need for food, water, and growth during that time.

Coniferous trees (cone-bearing, as opposed to singular seed-bearing), on the other hand, are typically evergreen. They retain their needles year-round, replacing them slowly throughout the year rather than all at once. Needles have a variety of benefits: they are smaller, more watertight and more windproof, and can photosynthesize all year long. Needles don’t collect a lot of sunlight themselves, but overall the tree can continue photosynthesizing at a reduced rate whenever sunlight is available during winter months. Needles, with their reduced surface area, are harder to destroy and less tasty to insects. Since conifers don’t drop all their needles at once, they don’t need a big nutrient boost in the spring – which is good, because conifers typically inhabit areas with poor soils and less water than their deciduous cousins."

Because conifers and deciduous trees grow in different soil types, researchers in geography are able to use the reflectance rates from infrared satellite imagery to map and monitor the different soil types and the balance of nutrients throughout the changing seasons of the year.

In false-color infrared images, the blue filter is eliminated, so each color is represented by the next colored filter and the infrared waves are represented in red/magenta. The tone depends on the health and nutrition levels of the vegetation, with darker tones indicating healthier vegetation.
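The band shift described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: each pixel is a hypothetical tuple of four band values (near-infrared, red, green, blue) on a 0-255 scale, and the function names are made up for this example.

```python
def false_color_pixel(nir, red, green, blue):
    """Map one pixel to a false-color display triple (R, G, B).
    The blue band is dropped and every other band moves one slot:
    infrared is shown as red, red as green, and green as blue."""
    return (nir, red, green)


def false_color_image(image):
    """Apply the band shift to an image stored as rows of
    (nir, red, green, blue) tuples."""
    return [[false_color_pixel(*pixel) for pixel in row] for row in image]


# Made-up sample values: a vegetation pixel with strong infrared
# reflectance next to a water pixel with very little.
sample = [[(200, 40, 60, 30), (10, 20, 30, 80)]]
display = false_color_image(sample)
```

The vegetation pixel comes out dominated by the red channel, which is why plants look red or magenta in these images.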

To translate this research into an art installation, I want to display a map of the United States with a color scale representing the differing health grades of the soil types based on the reflectance of the trees. A map similar in idea to a weather map would represent the soil changes across the states. To examine the specific changes in vegetation, soil types, and tree types, you would zoom in on the desired area.

To obtain these statistics, a series of photos would be taken twice a year, once in the spring and once in the winter. In spring all trees would have leaves or needles with a high rate of photosynthesis, so all trees would show bright reflectance. In the winter, however, only coniferous trees would show reflectance; deciduous trees would not, because they lose their leaves in winter and do not perform photosynthesis.

The two photos will then be overlaid on one another to show the change between the seasons: coniferous trees would not show a significant change the way deciduous trees do.
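The overlay step could be prototyped as a per-pixel difference between the two seasonal photos. The sketch below assumes both images are already aligned and stored as rows of infrared reflectance values (0-255); the `threshold` value is a hypothetical cutoff chosen only for illustration.

```python
def seasonal_change(spring, winter):
    """Subtract winter reflectance from spring reflectance per pixel.
    Both inputs are equally sized lists of rows of numbers."""
    return [
        [s - w for s, w in zip(spring_row, winter_row)]
        for spring_row, winter_row in zip(spring, winter)
    ]


def flag_large_drops(change, threshold=50):
    """Mark pixels whose reflectance dropped by at least `threshold`.
    A large drop suggests deciduous cover; a small one suggests
    conifers (or a surface that is not vegetation at all)."""
    return [[value >= threshold for value in row] for row in change]


# Made-up reflectance values for a 2x2 patch.
spring = [[200, 190], [180, 60]]
winter = [[190, 70], [175, 55]]
change = seasonal_change(spring, winter)
flags = flag_large_drops(change)
```

Only the top-right pixel is flagged here, since it is the only one whose reflectance falls sharply between spring and winter.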

Resources:

-

erikshalat

- Posts: 9

- Joined: Mon Oct 01, 2012 3:10 pm

Re: Wk8 - Vision Lab on Campus

The lab I chose to take inspiration from is the Four Eyes Lab and its research project called "Anywhere Augmentation". The idea is a natural extension of augmented reality technology. Augmented reality is basically a secondary, digital world that we have grafted onto our own physical one. For example, if you have scanned a QR code and had an image pop up on whatever interface you scanned it with, that is augmented reality.

The problem with augmented reality is that it is typically confined to a small, controlled environment. Things that interact with augmented reality, like a QR code or anything else that can be scanned, have to be attached to a physical surface, which means it works best with small things like cards or magazines.

Anywhere Augmentation is designed to bring Augmented Reality everywhere, even in non-constructed environments.

The project is designed to work with wearable augmented reality technology, such as special glasses that can pick up augmented reality signals. The Four Eyes Lab is looking into developing a graphical overlay that will map itself to the outside environment, making the world capable of utilizing AR. They accomplish this by using satellites in the "GPS Constellation". This constellation is the system of several satellites orbiting the Earth.

Three separate research projects go into Anywhere Augmentation. GroundCam is an inexpensive tracking camera that can track inertia and direction of movement in figures through a series of advanced data analyses. HandyAR is a motion tracker that works on people's hands and is able to recognize fingertips. The third sub-project is called "Evaluating Display Types for AR Selection and Annotation"; it examines and compares AR devices on phones with those worn around the head in the form of helmets or goggles.

Sources:

http://ilab.cs.ucsb.edu (Four Eyes Lab)

http://ilab.cs.ucsb.edu/index.php/compo ... icle/10/28 (Anywhere Augmentation)

http://www.un-spider.org/about-us/news/ ... nefits-gps (Satellite Constellation Image)

http://ilab.cs.ucsb.edu/projects/sdiver ... ndcam.html (Ground Cam)

http://ilab.cs.ucsb.edu/projects/taehee ... index.html (HandyAR)

Last edited by erikshalat on Tue Nov 20, 2012 12:35 am, edited 2 times in total.