Wk10 - Final Project presentation Here

Final Project is a forum document consisting of:

Title

Description:

1) Science/Vision/Technology researched

2) Translation into Art: How are you proposing to translate the science into art?

3) Museum presentation: What will the public see/experience

References: URLs

Make sure to include illustrations, found images, doodles, links to sound, videos, etc.

1. How will the work be situated, in which space?

2. How will it be represented: physical presence, monitors, some direct connection to the lab, etc.?

3. What are the key components to making the project understandable?

4. What is the "story", or context?

George Legrady

legrady@mat.ucsb.edu

-

hcboydstun

- Posts: 9

- Joined: Mon Oct 01, 2012 3:14 pm

Re: Wk10 - Final Project presentation Here

To summarize my final project: I chose to research and build my art installation around the work of two of UCSB’s research centers, the Center for Spintronics and Quantum Computation and the Center for Polymers and Organic Solids.

To begin my research I explored quantum computation, the kind of computing carried out by a quantum computer. Quantum computers can simulate things that cannot practically be simulated on a conventional computer. For a conventional computer, these problems are intractable, or too hard to render into a simulation. It is this difference in problem solving that gives quantum computers an advantage over conventional computers. Quantum computers work by exploiting what is called “quantum parallelism,” the idea that we can simultaneously explore many possible solutions to a problem. This does not mean that we necessarily arrive at a right or wrong answer; rather, the idea is to collect a plethora of data.

Quantum computers are built from ‘quantum bits’, which are the quantum analogue of the bits that make up conventional computers. These bits are manipulated by shining a laser on them, and each such manipulation of the atoms is called a quantum gate. A sequence of quantum gates, done in a particular order, can be read like a program for a conventional computer. Yet, for certain problems, this program is faster and more efficient at processing information.
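
To make the "sequence of gates as a program" idea concrete, here is a minimal sketch that simulates a single quantum bit on a conventional computer. It is only an illustration and not how a real quantum computer is built or driven: the helper names (`apply_gate`, `run_program`, `probabilities`) are made up for this example, and the two gates shown (Hadamard and NOT) are standard textbook gates chosen for simplicity.

```python
import math

# A single qubit state is a pair of amplitudes [a0, a1] for the values 0 and 1.
# Gates are 2x2 matrices. All helper names here are illustrative assumptions.
S = 1 / math.sqrt(2)
H = [[S, S],
     [S, -S]]          # Hadamard gate: turns 0 into an equal mix of 0 and 1
X = [[0, 1],
     [1, 0]]           # NOT gate: swaps 0 and 1


def apply_gate(gate, state):
    """Multiply a 2x2 gate matrix by a 2-element state vector."""
    return [gate[0][0] * state[0] + gate[0][1] * state[1],
            gate[1][0] * state[0] + gate[1][1] * state[1]]


def run_program(gates, state):
    """Apply the gates in the given order, like stepping through a program."""
    for gate in gates:
        state = apply_gate(gate, state)
    return state


def probabilities(state):
    """Chance of reading 0 or 1: the squared magnitude of each amplitude."""
    return [abs(amplitude) ** 2 for amplitude in state]


zero = [1, 0]  # the qubit starts as a definite 0
print(probabilities(run_program([H], zero)))      # equal chance of 0 and 1
print(probabilities(run_program([X], zero)))      # flipped to a definite 1
print(probabilities(run_program([H, H], zero)))   # two Hadamards undo each other
```

The order of the gates matters in general, which is why the list passed to `run_program` behaves like a program rather than a bag of operations.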

Hence, the quantum computer (in the simplest of terms) poses the question: how do we get smaller processors to have bigger storage units and produce more energy than the machine is run on? In other words, how do we create a more efficient and energy-conserving machine that fits in the palm of your hand?

Furthermore, I researched UCSB’s studies of organic semiconducting and light-harvesting materials. Organic semiconductors are, literally, organic materials with semiconducting properties. There are many advantages to producing devices with such technology, including affordability and more efficient energy usage. Light-harvesting materials act in the same way: they use minimal amounts of material to produce large amounts of energy. In some specific cases, researchers are using solar technology to create windows from transparent solar cells. The idea behind the transparent solar panel is one that I have specifically used in my project. The material that makes up these solar panels is a type of thin film, composed of a semiconducting polymer that forms a soccer-ball-shaped molecule pattern. When applied to a surface, this material self-assembles into a repeating pattern of micron-sized hexagonal cells (resembling a honeycomb) over a relatively large area. Yet the film is translucent, because the polymer chains only 'clump' together at the edges of each hexagon and remain thin across the centers. The densely packed edges strongly absorb light and could facilitate electrical conductivity, although to the naked eye the material itself appears clear. The goal of this research is to develop new, energy-efficient materials for commercial use.

From all of this information, I based my project on a very basic concept that combines quantum computers and light-harvesting materials. Put simply, a quantum of energy is a specific, discrete amount of energy. Light sources such as lasers emit light in units called photons. Since light is a form of energy that travels as a wave (hence the ‘wavelengths’ of light), a photon is a particle that carries a certain amount, or 'quantum', of energy that depends on its wavelength.
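
The link between a photon's wavelength and its energy is the standard relation E = h·c/λ (Planck's constant times the speed of light, divided by the wavelength). The short sketch below works it out for a few visible colors. The function names and the three example wavelengths are my own choices for illustration; they are not taken from the project.

```python
PLANCK_CONSTANT = 6.62607015e-34   # joule-seconds (SI defined value)
SPEED_OF_LIGHT = 299_792_458       # metres per second (SI defined value)
JOULES_PER_EV = 1.602176634e-19    # joules in one electronvolt (SI defined value)


def photon_energy_joules(wavelength_nm):
    """Energy of a single photon, E = h * c / wavelength."""
    wavelength_m = wavelength_nm * 1e-9
    return PLANCK_CONSTANT * SPEED_OF_LIGHT / wavelength_m


def photon_energy_ev(wavelength_nm):
    """Same energy expressed in electronvolts, a handier unit at this scale."""
    return photon_energy_joules(wavelength_nm) / JOULES_PER_EV


# Example wavelengths (assumed, typical values for each color).
for name, nm in [("red", 650), ("green", 532), ("blue", 450)]:
    print(f"{name:5s} {nm} nm -> {photon_energy_ev(nm):.2f} eV per photon")
```

Shorter wavelengths carry more energy per photon, so a blue photon is a larger "quantum" than a red one (roughly 2.8 eV versus 1.9 eV for the wavelengths used here).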

Taking these simple ideas, I generated the following idea for a gallery presentation. The gallery will be empty and dimly lit, with the exception of one skylight in the ceiling. Using a translucent solar panel to cover the floor, I will collect solar energy through the skylight. From my research, I discovered that thinner solar cells retain the absorption of a thicker device and have a higher voltage. Thus, the thin solar panel will be able to harvest a high-voltage light, i.e. a dim sort of laser. Connected to the solar panel will be a transmitter that will take this energy and send it to a projector attached to the ceiling of the exhibit. Inside the projector, a quantum computer will be ‘reading’ the energy signals and simultaneously emitting photons. From the projector, this laser (created from the solar-harvested sunlight) will point directly at one of four silicon canvases placed on opposite walls of the exhibit.

From my previous research, I concluded that silicon is a semi-reactive material to the laser, and thus would show the most movement from the photons that are transmitted onto it. Thus, the laser will serve to activate the silicon’s photons and map the photons’ specific movements across the first canvas. Although technically the laser’s effects are only easily observable at the microscopic level, I will be assuming that these small movements and patterns of photons can be visible to the naked eye (for the sake of the audience being able to witness these events within a gallery space).

This brings me to the core artistic concept. By channeling energy at high velocities across the first canvas, this canvas will react and emit the laser onto the next canvas. The idea behind this photon movement is called quantum teleportation. When photons become entangled (entanglement means that the properties of the photons, such as their polarization, are much more strongly correlated than is possible in classical physics), and a laser is then used to fire one of the photons, it is possible to transmit the laser back and forth from canvas to canvas. This is because when the quantum state of one photon is altered, the quantum state of the second photon is immediately altered, faster than the speed of light. In essence, each canvas would read the mind of the last, creating a dazzling display of photon waves as the laser navigates to each canvas.

SEE IT WORK (the video simulation I have made):

Interestingly, I decided to use the skylight and solar panel as part of this project because, depending on the time of day, the light emitted by the laser and the photon arrangement will vary. This means that at any given time of day, the patterns displayed on each of the canvases are constantly changing. For example, imagine that a cloud momentarily blocks the direct sunlight onto the solar panel. This would weaken the laser, causing fewer photons to be projected onto each canvas and the pattern of the photons to change at the same time. (I originally derived this idea from the work of James Turrell, who uses skylights and light manipulation in his exhibits.)

Hence, my idea for this project spans beyond merely introducing a scientific medium into the art gallery. An issue that ran through my readings and research is creating work that is sustainable in the future. By using light-harvesting materials to power computers (whether quantum computers, as I have suggested, or conventional computers for the time being), we are creating a full circle of energy saving. By using light-harvesting materials, we obtain more energy from the sun than is used to operate the solar panel. If this light is then channeled into powering computer operating systems, we cut out the need for electrical power circuit systems. Moreover, if devices such as the quantum computer also output more energy than is input, then we are generating a high velocity of power without the negative effects of electrical energy consumption. Likewise, with the advancements in quantum teleportation, we would be able to run all of our computers off of one generated energy source, sending energy out across space to billions of computers worldwide. While this seems far-fetched, introducing these sciences to the public in an understandable way would allow the public to be informed about and involved in these energy-saving options.

Photo links: in order shown

http://www.popsci.com/science/article/2 ... m-computer

http://www.redorbit.com/news/technology ... ep-closer/

http://www.gizmag.com/sphelar-cells-are ... re/112733/

http://www.gizmag.com/light-harvesting- ... ilm/16819/

http://qis.ucalgary.ca/QO/wolfgang.jpg

http://themindlesseye.blogspot.com/2010 ... space.html

Information sources (not in order):

http://www.csqc.ucsb.edu/index.html

http://michaelnielsen.org/blog/quantum- ... -everyone/

http://en.wikipedia.org/wiki/Spintronics

http://www.scottaaronson.com/democritus/lec10.html

https://docs.google.com/viewer?a=v&q=ca ... PtvmGYLMZA

http://physics.usask.ca/~chang/homepage ... r_cell.gif

http://www.gizmag.com/light-harvesting- ... ilm/16819/

http://en.wikipedia.org/wiki/Organic_semiconductor

http://www.cpos.ucsb.edu/research/materials/

http://www.nature.com/nnano/focus/organics/index.html

http://physics.usask.ca/~chang/homepage ... ganic.html

http://www.pveducation.org/pvcdrom/desi ... t-trapping

http://news.discovery.com/tech/solar-po ... 10919.html

http://en.wikipedia.org/wiki/Photon

http://simple.wikipedia.org/wiki/Wave-particle_duality

http://www.zamandayolculuk.com/cetinbal ... EPORTb.htm

http://qis.ucalgary.ca/QO/wolfgang.jpg

Last edited by hcboydstun on Mon Dec 03, 2012 9:30 pm, edited 1 time in total.

Re: Wk10 - Final Project presentation Here

WHERE IS REAL? - performance/installation

For our project we (Erik and Giovanni) are working with the Four Eyes Lab on an Augmented Reality experience that will blur the lines of our “objective” physical reality. This project will give you a controlled out-of-body experience.

Viewers in the museum space will wear AR glasses that display data, localized via GPS satellite, so that they see the surroundings of Times Square in New York City.

Augmented reality (AR) is defined as "a live, direct or indirect, view of a physical, real-world environment whose elements are augmented by computer-generated sensory input such as sound, video, graphics or GPS data."

AR is related to the concept of mediated reality, which describes interactions with the real environment in which the view is modified by a computer.

AR contrasts with virtual reality, where the environment is entirely virtual and replaces the real world. AR usually works in real time and in semantic context with environmental elements, and makes it possible to overlay artificial information on the real world.

AR has many areas of application, such as archaeology, architecture, art, commerce, education, industrial design, medicine, the military, the workplace, sports and entertainment, tourism, and support tasks (such as assembly, maintenance, surgery, and translation).

In addition to Augmented Reality, we are also using the Four Eyes Lab’s research in “Wide-area Mobile Localization from Panoramic Imagery”. This is a system in which a camera can more easily capture panoramic images and track movement in real time. Normally AR requires “registration markers”, such as the QR codes you can find in magazines or in other forms of advertisement. The Four Eyes Lab’s work in “Anywhere Augmentation” is designed to enable Augmented Reality anywhere, without a QR code or other registration marker, by using GPS satellites or tracking.

1. How will the work be situated, in which space?

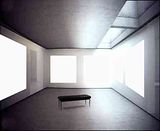

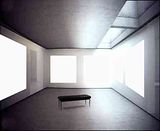

For our performance/installation we will be situated in a vacant room in the art museum.

It is key that the room be empty because the Augmented Reality visualization will take form from "nothing".

"Ex nihilo"!!!

We will add white wall paneling to make the room domed, for a more immersive panoramic view of our other location.

The second space will be situated in Times Square, New York City. This space, which we have chosen for its large number of people at any given time, will be reproduced in the Augmented Reality space in the museum. Our installation here will include a large glass wall on which the AR will be seen. These two spaces will interact despite being nowhere near each other.

2. How will it be represented: physical presence, monitors, some direct connection to the lab, etc.?

Using a wide-area panoramic camera and a GPS satellite, the image taken by the panoramic camera will be projected in the AR space in the form of the localized area in New York.

It will be as if the viewer/user in the museum has been transported to New York, and vice versa.

3. What are the key components to making the project understandable?

You need a few things: the AR data, a tracking system, an interface onto which the AR will be displayed, and reality.

The panoramic camera will capture the images in New York and, using advanced motion and tracking systems, capture the depth of three-dimensional objects such as nearby people, and project those forms as three-dimensional shapes in the AR space that will map over the physical world.

4. What is the "story", or context?

Our main concept is that in the future it will be difficult to distinguish the boundaries of reality and “mixed reality”, the world where digital and physical collapse into each other.

To give a really simple example: if you want to participate in a live event, like a sports match or a concert, or if you have a business meeting where you have to meet in a meeting room, you would no longer have to be at that specific location. You could work from the comfort of your own home, and no one might even know you aren’t actually right there.

This performance wants to make you think about how the concept of space and time is changing with the use of new technologies like AR. In the future it will not be necessary to be in the real place at a specified moment to directly interact with people or objects that are actually in that space; it is like teleportation or ubiquity.

To make it clear, our project will have our two installation spots interacting with each other in the form of a simple game, where on-screen icons will appear that can be moved around directly from either of the two locations.

Think of Pong.

Two paddles, with a person in New York controlling one and a person in Santa Barbara controlling the other. They can toss a ball back and forth at each other as if they were in the same place at the same time, and it will no longer be possible to tell where the interaction is taking place, or to answer the question:

where is real?
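
As a rough sketch of how one shared game could be driven from two places, the code below keeps a single Pong state that both sites update. Everything in it is an assumption made for illustration: the field size, the names (`PongState`, `move_paddle`, `step`), and the site labels `"NY"` and `"SB"`. The network link between the two locations and the AR display are left out entirely.

```python
from dataclasses import dataclass

WIDTH, HEIGHT = 100, 60   # shared playing field, arbitrary units (assumed)
PADDLE_HALF = 8           # half of a paddle's height (assumed)


@dataclass
class PongState:
    ball_x: float = WIDTH / 2
    ball_y: float = HEIGHT / 2
    vel_x: float = 2.0
    vel_y: float = 1.0
    paddle_ny: float = HEIGHT / 2   # left paddle, controlled from New York
    paddle_sb: float = HEIGHT / 2   # right paddle, controlled from Santa Barbara


def move_paddle(state, site, y):
    """Record a paddle position reported by one site, kept inside the field."""
    y = max(PADDLE_HALF, min(HEIGHT - PADDLE_HALF, y))
    if site == "NY":
        state.paddle_ny = y
    elif site == "SB":
        state.paddle_sb = y
    else:
        raise ValueError(f"unknown site: {site}")


def reset_ball(state):
    """Put the ball back in the centre after a miss."""
    state.ball_x, state.ball_y = WIDTH / 2, HEIGHT / 2


def step(state):
    """Advance the ball one tick, bouncing off walls and paddles."""
    state.ball_x += state.vel_x
    state.ball_y += state.vel_y
    if state.ball_y <= 0 or state.ball_y >= HEIGHT:
        state.vel_y = -state.vel_y                    # top/bottom wall bounce
    if state.ball_x <= 0:
        if abs(state.ball_y - state.paddle_ny) <= PADDLE_HALF:
            state.vel_x = abs(state.vel_x)            # New York returns the ball
        else:
            reset_ball(state)
    elif state.ball_x >= WIDTH:
        if abs(state.ball_y - state.paddle_sb) <= PADDLE_HALF:
            state.vel_x = -abs(state.vel_x)           # Santa Barbara returns it
        else:
            reset_ball(state)


game = PongState()
move_paddle(game, "SB", 55)      # Santa Barbara moves its paddle toward the ball
for _ in range(25):
    step(game)
print(game.ball_x, game.vel_x)   # ball has reached the right edge and turned back
```

Because both sites only ever read and write this one state, neither location "owns" the ball, which is the point of the piece.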

---------------------------------------------------------------

CLICK TO WATCH THE DEMO!!!

http://www.youtube.com/watch?v=i-RNLUTg ... e=youtu.be

---------------------------------------------------------------

http://en.wikipedia.org/wiki/Augmented_reality

http://ilab.cs.ucsb.edu/

http://ilab.cs.ucsb.edu/index.php/compo ... icle/10/28

http://ilab.cs.ucsb.edu/index.php/compo ... icle/10/99

http://ilab.cs.ucsb.edu/index.php/compo ... cle/10/144

Picture sources:

- http://www.symetrix.co/sound-associates ... ay-babies/

- http://www.businessinsider.com/best-aug ... 011-3?op=1

- http://www.news-24h.it/2012/06/nexus-7- ... tere-puro/

Last edited by giovanni on Thu Dec 06, 2012 9:10 am, edited 10 times in total.

-

erikshalat

- Posts: 9

- Joined: Mon Oct 01, 2012 3:10 pm

Re: Wk10 - Final Project presentation Here

[see Giovanni's Post]

Last edited by erikshalat on Thu Dec 06, 2012 9:02 am, edited 5 times in total.

3D Video Chat

Working with Ashley Fong

In the Electrical and Computer Engineering department at UCSB, Stephen Mangiat and Jerry Gibson have been working on Disparity Remapping for Handheld 3D Video Communications. Their goal is to enhance mobile video conferencing with 3D perception.

Our mind’s ability to visualize and anticipate curvatures and facial structures makes the transition to 3D imaging easier. Most 3D displays require glasses, which should become obsolete with the development of autostereoscopic (glasses-free) 3D imaging. The difficulties facing this kind of imaging are the comfort zone of the observer’s eyes and 3D perception. The eyes normally adjust to focus light from the depth they are looking at. With a 3D display this link is broken, because the person has to focus on the light at the distance of the display. This disconnection causes discomfort and fatigue.

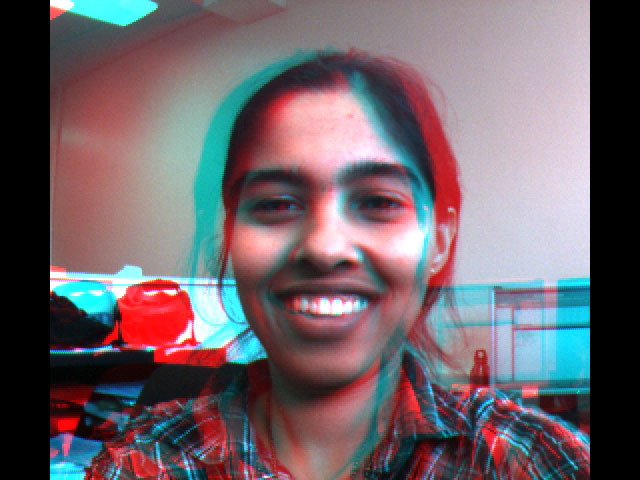

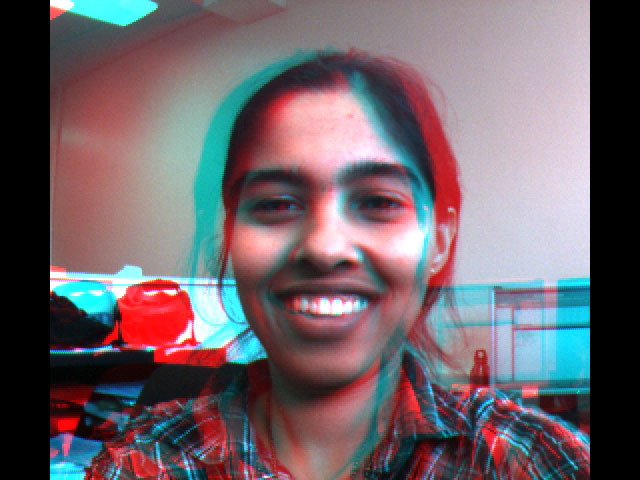

{Shifted Frames}

To avoid discomfort, the stereo baseline (the distance between the two viewpoints from which the left and right images are captured, shown above as the layered blue and red images) can be reduced, but this also reduces the 3D effect. As the baseline shrinks to zero, the 3D effect collapses into a 2D one.
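The trade-off between baseline and 3D effect can be illustrated with the standard pinhole stereo relation, in which the disparity between the two views is proportional to the baseline (disparity = focal length x baseline / depth). This is only a sketch: the focal length, baselines and face depth below are made-up values for illustration, not figures from Mangiat and Gibson's work.

```python
def disparity_px(focal_px: float, baseline_m: float, depth_m: float) -> float:
    """Disparity in pixels of a point at depth_m seen by two parallel cameras."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return focal_px * baseline_m / depth_m


# Hypothetical values, chosen only for illustration.
FOCAL_PX = 800.0      # focal length expressed in pixels
FACE_DEPTH_M = 0.5    # distance from the handheld device to the face

for baseline_m in (0.06, 0.03, 0.0):
    d = disparity_px(FOCAL_PX, baseline_m, FACE_DEPTH_M)
    print(f"baseline {baseline_m:.2f} m -> disparity {d:.1f} px")
```

Halving the baseline halves the disparity, and a baseline of zero gives zero disparity, which is the flat 2D case.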

Video quality depends on the users’ camera/webcam and screen resolution. The processing used to create the 3D image can leave lines missing at the window edges, which the brain cannot resolve and finds confusing. In a 2D perspective, objects at a greater distance/depth appear in the same location. It is then essential to capture the scene so that objects in the back can be brought forward by shifting the images in post-processing.

{Shifted Frames with adjustments}

For our project we want to maintain viewer comfort while preserving the 3D effect. Viewers will have the chance to see others, and be seen, in stereoscopy. The key is to communicate with others across the country, as long as gallery hours in the different time zones overlap. Immersing the spectator in the art is important because people’s interaction with gallery art is limited when they feel apathetic toward it. There is a social barrier where people feel that art belongs only to a certain class and taste, but in reality it belongs to, and can be viewed by, everyone. This 3D video communication will allow everyone who walks into the gallery to communicate with someone elsewhere. One user can be in the LA gallery talking to a user in a NY space. People will then be exposed to different cultures.

The exhibit space will consist of tablets such as iPads, mounted on the wall so that they can be adjusted to the viewer’s eye level. The space will be open to all ages because there is no limit to the exploration of new places, people and cultures with 3D imaging. Sections of the galleries will be placed by wide windows to create a fully immersive experience. The person will be able to see not only the other user but also the surrounding environment. The scenery would consist of people bustling down the street, or the skyline if the gallery is set up high. Users will be able to sign in with their locations and see who else is online in galleries all over the globe. They can choose who they want to talk to while learning about them and their side of the world. Users will be given the choice of talking or typing, for their own personal reasons.

Some users might not be willing to talk to a stranger but will still want to experience this innovative 3D space. At times a person might also be unable to talk to someone because of limited space, so cameras will be placed outside certain parts of the galleries, wirelessly streaming to other non-communicating tablets. This will allow other people to see the 3D aspect while still peeking into the lifestyle of another part of the globe.

In the future we want this 3D vision to capture multiple viewers in 3D, as opposed to just one. Language barriers will be broken by real-time subtitles with more accurate translations. We also want people to see where the technology is heading, and this is a good way to show them. This technology will be a gateway to understanding how to make multidimensional forms of conversation, including holograms. Eventually, we want to be able to see people in a 360-degree view while chatting with them over any device.

If a tablet can draw in viewers, then it’s not far-fetched that a large screen could project life-sized figures in real time, as if the observer were actually there in NY, Seoul, Beijing or Dubai.

http://vivonets.ece.ucsb.edu/handheld3d.html

http://vivonets.ece.ucsb.edu/mangiat_espa.pdf

-

juliacurtis

- Posts: 8

- Joined: Mon Oct 01, 2012 3:56 pm

Re: Wk10 - Final Project presentation Here

For the final project I will be combining two areas of research at UCLA: the research being done at the Jonsson Comprehensive Cancer Center, and the Molecular Visualization Laboratory, which the Department of Biological Chemistry maintains as a resource.

"The Molecular Visualization Laboratory (MVL) is used for research and teaching purposes. It is designed for viewing digital representations of complex molecular structures in a stereo (3-D) environment."(7)

Often, people fighting a deadly disease such as cancer have diminished chances of winning the battle because they weren't diagnosed early enough. Clearly this is a very important area in need of improved molecular imaging abilities. My intention with this project is to take the work going on at the Molecular Visualization Laboratory and the Jonsson Comprehensive Cancer Center, explore the principles and areas of applicability of molecular imaging, and then propose an art museum exhibit that will raise awareness and knowledge of the research. In other words, I will be delving into the problems that create the need for more research like that of the two UCLA groups above, and creating a better understanding of, and exposure to, the principles they deal with. Ultimately I would like my exhibit to raise awareness and create a connection between the public and the medical research community.

In the cancer molecular imaging program area at the Jonsson Center, Director Dr. Anna Wu and Assistant Director Dr. Johannes Czernin "[seek] to use molecular imaging to study cancer in living organisms, first in laboratory models and ultimately, in people. Molecular imaging allows non-invasive visualization of key molecules, processes, and events and allows a window into the changes that occur when cancer develops." (1)

Molecular imaging "enables the visualization of the cellular function and the follow-up of the molecular process in living organisms without perturbing them." (2) Unlike traditional imaging, it uses probes known as biomarkers, which interact chemically with their surroundings so that an image can show the presence of certain molecules and their movements. "Much research is currently centered around detecting what is known at a predisease state or molecular state that occur before typical symptoms of a disease are detected." (2) In optical imaging, fluorescence, bioluminescence, absorption or reflectance provide the various sources of contrast. Infrared dye-labeled probes, developed to identify and react with specific targets in the body, capture a molecular moment in time on paper in the form of an image.

Their diverse interests range from identifying irregularities and applying new developments throughout the process of diagnosing and treating cancer, to developing new molecular imaging techniques.

Links to their separate websites provide more information, along with several YouTube videos on the topic:

http://www.cancer.ucla.edu/Index.aspx?page=131

http://www.biolchem.ucla.edu/Resources_ ... ratory.htm

Molecular Imaging Technology at UCLA’s Jonsson Cancer Center

http://www.youtube.com/watch?feature=pl ... XaPct38fdw

Patient Advocacy Session: Molecular Imaging and Heart Disease

http://www.youtube.com/watch?feature=pl ... r-Ga_WSgg4

Molecular Imaging Coming of Age with New Strategies in Both PET and MRI

http://www.youtube.com/watch?feature=pl ... I_thWGSIhA

"Molecular imaging is being hailed the next great advance for imaging." (4) It is itself a translation enabled through advances in technology. My aim is to support this research and raise awareness of its needs by bringing it into people's lives and contextualizing it.

The following is a description of the technology at the molecular visualization lab: "The MVL is equipped with a 3-D projection system, utilizing "active stereo" technology. A ceiling-mounted projector with fast phosphor tubes rapidly projects alternating images, one for the right eye and one for the left. These images are displayed at approximately twice the normal refresh rate. Using specially-designed glasses, equipped with two infra-red controlled LCD shutters working in synchronization with the projector, the audience is "tricked" into seeing a full 3-D image. The system provides excellent image quality unattainable by other methods. Movable images of molecular structures are fed into the projector in real time thanks to a powerful SGI Octane workstation. The MVL is able to accommodate about 15 people simultaneously." (7)

Here we can see that the technology necessary for visualizing the molecular level is immense, and I'm not sure whether a temporary installation would be possible. Regardless, there is a real need to use more social and artistic media to include more of the public in current medical and scientific developments, and that is where my art proposal comes into play.

The story? We all have one. We all have someone who has brought deadly diseases and their impacts into our lives. The context? Why? We need a better understanding of diseases like cancer. Too much attention and money goes towards research for pharmaceuticals or to pharmaceutical companies themselves. We need to raise awareness and place more pressure on researchers to direct their aims toward understanding the causes and life cycles of the cells and processes that are killing people every day.

This exhibit would be towards the back of the museum, and it would need one clear opening for the entrance and one clear opening for the exit. In other words, the exhibit would be something you walk through. The room would ideally be rectangular, around 15 x 20 ft. There would be five to seven podiums, each with its own 3D visualization of currently known facts and common diseases. This would bring a visual understanding of the molecular level, something at work all the time in each and every one of us, and increase public knowledge of the processes and interactions that medical researchers are currently studying. The exhibit would also be interactive, in the sense that a visualization will only appear when someone stands within about four feet of its podium.
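The proximity behaviour described above could be prototyped roughly as follows. This is a sketch under assumptions: visitor positions are taken to come from some tracking sensor that this proposal does not specify, the four-foot zone is treated as a radius around each podium, and the podium names and coordinates are hypothetical.

```python
import math
from dataclasses import dataclass

TRIGGER_RADIUS_FT = 4.0  # assumed reading of the four-foot zone


@dataclass(frozen=True)
class Podium:
    name: str
    x_ft: float
    y_ft: float


def active_podiums(podiums, visitors, radius_ft=TRIGGER_RADIUS_FT):
    """Return the names of podiums with at least one visitor within radius_ft."""
    active = []
    for podium in podiums:
        near = any(
            math.hypot(vx - podium.x_ft, vy - podium.y_ft) <= radius_ft
            for vx, vy in visitors
        )
        if near:
            active.append(podium.name)
    return active


# Hypothetical layout inside a 15 x 20 ft room, positions in feet.
podiums = [Podium("cell division", 3.0, 5.0), Podium("PET imaging", 12.0, 5.0)]
visitors = [(4.0, 7.0)]
print(active_podiums(podiums, visitors))
```

Only the podiums returned by `active_podiums` would show their visualization; the rest stay dark until someone approaches.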

Art and Science, In All Of Us.

So, what will the experience be?

The lights will be somewhat dimmed to allow the 3D visuals to appear more brightly once the podiums they are sitting on are approached. Viewers will walk through the center aisle of the room with the podiums scattered on each side. As one walks up to the podium the visualizations will appear and a written description of the process being shown will also be attached to each of the podiums.

The feasibility of getting this kind of technology into a museum right now is not guaranteed. However, I would like processes such as cells multiplying and new forms of cancer treatment to be demonstrated, with the pathways shown. Research yielding new medical insights, as well as advances in molecular technology, will also be presented.

For example:

“The new method of 4D PET image reconstruction works by taking data from specific points—almost like taking individual frames of a film reel—when patients are taking air into their lungs or when blood is being forced by a contraction of their heart muscle. Where other diagnostic imaging procedures—such as X-rays, computed tomography and ultrasound—offer predominately anatomical pictures, PET allows physicians to see how the heart is functioning. The visual representation of this functional information can be further enhanced with image reconstruction techniques such as this one, which uses quantitative image data and a special algorithm that transforms the original image into a crystal-clear 4D image that has none of the hazy areas ordinarily caused by the rhythmic movements of the heart and lungs.” (6)

Although the following image is 2D, I would like something along these lines to be demonstrated three-dimensionally on a podium.

The components for making sure the context is understood? Upon entering the exhibit, there will be a display showing the current rates at which people are dying of the deadliest diseases, as well as an image similar to the one above describing the way molecular imaging works. Then, each podium will provide information about its visualization.

Lastly, before exiting the room, there will be blank canvases and markers where anyone who wishes can write the name of a loved one lost to a disease or currently fighting one. The point of the exhibit will be to connect science with art, and the public with the people researching the complex workings of the human body at the molecular level.

Sources:

(1) http://www.cancer.ucla.edu/Index.aspx?page=131

(2) http://en.wikipedia.org/wiki/Molecular_imaging

(3) http://www.dyomics.com/in-vivo-imaging.html

(4) http://radiology.rsna.org/content/244/1/39.full

(5) http://clincancerres.aacrjournals.org/c ... /2125.full

(6) http://www.quantumday.com/2012/06/molec ... -into.html

(7) http://www.biolchem.ucla.edu/Resources_ ... ratory.htm

Last edited by juliacurtis on Thu Dec 06, 2012 8:15 am, edited 1 time in total.

Re: Wk10 - Final Project presentation Here

Title: Becoming Art

Description: My exhibit will use 3D LiDAR vision technology to capture photos of the museum goers. I will then juxtapose the photos of the viewers with photos of well-known artworks/landscapes in an effort to coerce viewers into becoming active participants in the art, and not just passive bystanders.

Vision Technology Researched:

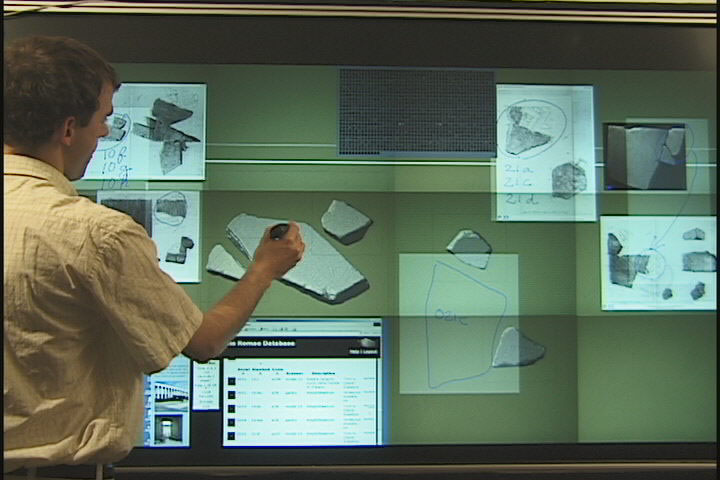

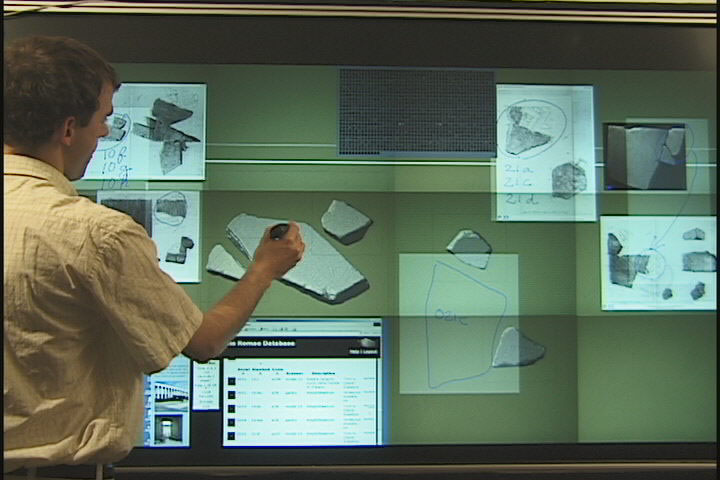

My final project will focus on 3D LiDAR vision technology. LiDAR (Light Detection and Ranging) is a remote sensing technology that illuminates a subject with pulsed laser light. The data collected from the returning light is then used to create a composite image. Though LiDAR technology is not currently being used at UCSB, it is being used at Santa Barbara’s very own Raytheon Corporation. Raytheon has produced a range of 3D Flash LiDAR cameras, their most recent one being TigerEye. 3D LiDAR technology captures X, Y, and Z coordinates to create an accurate three-dimensional visualization. This allows people to manipulate an image in ways that 2D images cannot be manipulated (e.g., stripping layers of the image; see the second YouTube video). LiDAR systems combine GPS tracking, remote sensing, laser imaging, and Inertial Measurement Units (IMU) to create accurate geospatial visualizations. 3D LiDAR technology is used across fields such as archaeology, architecture, physics, and even the military. For this project, however, I will use LiDAR for artistic purposes.
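As a rough illustration of how a laser pulse becomes an X, Y, Z coordinate, the sketch below uses the basic time-of-flight relation (distance = speed of light x round-trip time / 2) and a simple spherical-to-Cartesian conversion. The function names, the 20 ns pulse time and the beam angles are hypothetical; a real camera such as the ones mentioned above would do this per pixel in hardware, and its actual processing may differ.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def range_from_round_trip(t_seconds: float) -> float:
    """Distance to the surface; the pulse travels out and back, hence the / 2."""
    return SPEED_OF_LIGHT_M_S * t_seconds / 2.0


def to_xyz(range_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert a measured range and beam direction to X, Y, Z (sensor at origin)."""
    horizontal = range_m * math.cos(elevation_rad)
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = range_m * math.sin(elevation_rad)
    return x, y, z


# Hypothetical measurement: a pulse that returns after 20 nanoseconds.
r = range_from_round_trip(20e-9)
print(f"range: {r:.3f} m")
print("point:", to_xyz(r, math.radians(10), math.radians(5)))
```

A round trip of 20 ns corresponds to a surface about three metres away, which is roughly the scale of a person standing in front of a photo booth.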

LiDAR imaging's normal application

Translation into Art/Museum Presentation:

For my art exhibition, I am proposing an interactive exhibit where viewers become participants who can manipulate the technology and an image of their choosing for themselves. Participants will have the option of having their photo taken by a range of 3D LiDAR cameras, after which the 3D visualization will be projected onto a photo of a realistic landscape of their choosing (via a computer that will be available for use). If participants do not wish to have their photo taken, a model will be on standby to keep the presentation continuous. The viewer will then be allowed to manipulate variables of their portrait such as color, size, and layers, and once they are finished, the image will be stored as part of a series in a web database. There will be two stations, one for people to get photographed in and another for the manipulation process. Meanwhile, the portraits will be projected against a wall in the museum.

Art exhibits are normally about the viewer and the artwork(s) being viewed. There is an apparent separation between the viewer and the viewed, as well as between what is real (the viewer) and what is not (the artwork). By projecting a 3D LiDAR image of the viewers onto realistic backgrounds (e.g., a photo of the Eiffel Tower or a well-known painting), this separation will be reversed. The viewer will become a part of the art installation and will be forced to become an active viewer, and there will be a reversal of what is considered real and what is not. This exhibit will also challenge viewers to think about voyeurism, and will place them in the position of both the viewed and the viewer.
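One way the portrait could be laid over a flat background image is to project each 3D point through a simple pinhole camera model and paint it onto a copy of the background. The sketch below is only an assumption about how this step might work; the `composite` function, the tiny text "image" and the camera parameters are all hypothetical stand-ins for real image data.

```python
def composite(background, points, focal_px, cx, cy):
    """Paint projected 3D points over a copy of a 2D background image.

    background: list of rows, each a list of pixel values
    points: iterable of (x, y, z, value); z > 0 means in front of the camera
    """
    height, width = len(background), len(background[0])
    out = [row[:] for row in background]  # leave the original untouched
    # Draw far points first so that nearer points overwrite them.
    for x, y, z, value in sorted(points, key=lambda p: -p[2]):
        if z <= 0:
            continue  # behind the camera, cannot be projected
        u = int(round(focal_px * x / z + cx))
        v = int(round(focal_px * y / z + cy))
        if 0 <= u < width and 0 <= v < height:
            out[v][u] = value
    return out


# Hypothetical 5 x 3 background of "." pixels and a two-point portrait.
background = [["."] * 5 for _ in range(3)]
portrait = [(0.0, 0.0, 2.0, "#"), (0.5, 0.0, 1.0, "@")]
for row in composite(background, portrait, focal_px=2.0, cx=2.0, cy=1.0):
    print("".join(row))
```

The color, size, and layer controls mentioned above would then amount to changing the point values, the focal length, or which depth range of points is passed in.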

example of what people look like when their photos are taken by a 3D LiDAR camera.

http://www.youtube.com/watch?v=Tc9c2k2eZ-4

another example, differences in color

Imagine this juxtaposed over a well-known, relatively flat image (below) and projected for the rest of the viewers to see, creating one composite image.

In terms of space, the exhibit will be in the main room of the museum. I will set up the photo booth and the photo-manipulation booth on one side of the room, while a ceiling-mounted projector will broadcast the photo booth live against a wall. Some printed (already taken) photos will be placed around the rest of the room for viewers to look at while they wait.

A key component in making this exhibit understandable will be the experience of participating. By actively participating in this hands-on exhibit, viewers will reevaluate their relationship to, experience of, and communication with art.

example of museum space

http://www.advancedscientificconcepts.c ... -FINAL.pdf

http://www.youtube.com/watch?v=wRpjIxQg ... re=related

example of a LiDAR's normal use

http://lidarservices.com/lsi_technology/

Radiohead's House of Cards

http://www.youtube.com/watch?v=8nTFjVm9sTQ

an example of LiDAR Video Technology.

Description: My exhibit will use 3D LiDAR vision technology to capture photos of the museum goers. I will then juxtapose the photos of the viewers with photos of well-known artworks/landscapes in an effort to coerce viewers to become active participants of the art, and not just passive stand by-ers.

Vision Technology Researched:

My final project will focus on 3D LiDAR vision technology. LiDAR (Light Detection and Ranging) uses remote sensing technology to illuminate a desired subject with pulsing light from a laser. Data that is collected from this remote sensing is then used to create a composite image. Though LiDAR technology is not currently being used at UCSB, it is being used at Santa Barbara’s very own Raytheon Corporation. Raytheon has produced a range of 3D Flash LiDAR cameras, their most recent one being TigerEye. 3D LiDAR technology captures X, Y, and Z coordinates to create accurate three dimensional visualization. This unique process allows people to manipulate an image in ways that 2D images cannot be manipulated (i.e stripping layers of the image, see second youtube video). The LiDAR combines GPS tracking, remote sensing, laser imaging, and Inertial Measurement Units (IMU) to create accurate geospatial visualizations of information. 3D LiDAR technology is used across different fields such as archaeology, architecture, physics, and even in the military, however, for the purposes of this project I will use the LiDAR for artistic means and purposes.

LiDAR imaging's normal application

Translation into Art/Museum Presentation:

For my art exhibition, I am proposing an interactive exhibit in which viewers become participants who can manipulate the technology and an image of their choosing. Participants will have the option of having their photo taken by a range of 3D LiDAR cameras, after which the 3D visualization will be projected onto a photo of a realistic landscape of their choosing (via a computer that will be available for use). If participants do not wish to have their photo taken, a model will be on standby to keep the presentation continuous. The viewer will then be able to manipulate variables of their portrait such as color, size, and layers, and once they are finished, the image will be stored as part of a series in a web database. There will be two stations, one where people are photographed and another for the manipulation process. Meanwhile, the portraits will be projected against a wall in the museum.

Art exhibits are normally about the viewer and the artwork being viewed. There is an apparent separation between the viewer and the viewed, as well as between what is real (the viewer) and what is not (the artwork). By projecting a 3D LiDAR image of the viewers onto realistic backgrounds (e.g., a photo of the Eiffel Tower or a well-known painting), this separation will be reversed. The viewer will become a part of the art installation and will be forced to become an active viewer, and what is considered real and what is not will be inverted. This exhibit will also challenge viewers to think about voyeurism by placing them in the position of both the viewed and the viewer.

example of what people look like when their photos are taken by a 3D LiDAR camera.

http://www.youtube.com/watch?v=Tc9c2k2eZ-4

another example, differences in color

imagine this juxtaposed over a well-known, relatively flat image (below) and projected for the rest of the viewers to see, creating one composite image.

In terms of space, the exhibit will be in the main room of the museum. I will set up the photo booth and the photo-manipulation booth on one side of the room. A projector mounted on the ceiling will broadcast the photo booth live against a wall, and some printed (previously taken) photos will be placed around the rest of the room for viewers to look at while they wait.

Last edited by slpark on Wed Dec 05, 2012 12:17 pm, edited 3 times in total.

-

andysantoyo

- Posts: 6

- Joined: Mon Oct 01, 2012 4:06 pm

Re: Wk10 - Final Project presentation Here

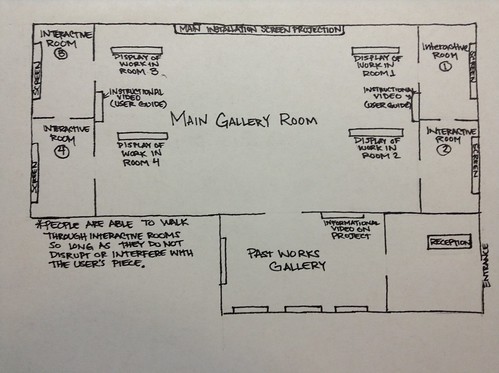

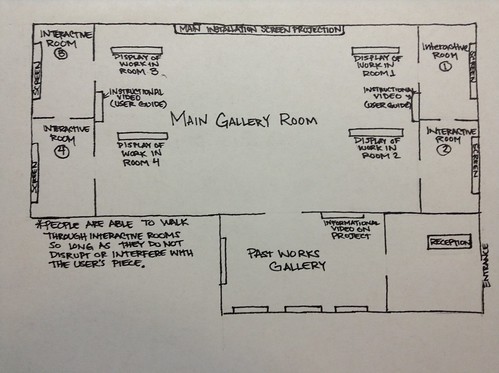

Interactive Wall-Sized Screens

Over the past few weeks I have researched the work being done at Sato Laboratories in Japan, where they are creating an interactive database system for communities. These databases are very similar to a community bulletin board, where information and news are stored and made available to keep people updated. It is interactive in the sense that a user logs in through their smartphone by scanning a bar code on the interactive screen. With the database at their disposal, users can make quick hand movements that are recognized by a camera and carried out on the screen. Users can add to the bulletin board by publishing their own messages, which would be moderated, since not everything posted may be appropriate to publish.

Translating this into an art form, I wish to use it as an interactive piece of technology that, instead of publishing information, lets people become artists by submitting their own art. People who choose to interact with the technology will choose which form of art to make: a drawing, a painting, or a sculpture. Working in 3D has the advantage of giving depth to the work, which is why I also added sculpture to the forms the audience can choose from. Not unlike holographic images, where the piece displayed gives a full 360-degree view, the hand gestures will allow users to “move” the piece in order to construct their sculpture. Similarly, a drawing can be made multi-dimensional, if so desired, so that it becomes something like a schematic drawing with a three-dimensional feel. It is similar with painting; paintings also give depth, and, much like in the TED talk where information about a space was gathered from a painting, the same can be done with the painting made here. Since the system is based on hand movement recognition, anything done by the user's hands will be recognized; if a person wants to pull the piece in, enlarge it, push it out, or decrease its size, they do so with the corresponding gestures, much like on a touch screen. Users will have to keep in mind that, since the camera recognizes movement frame by frame, their movements should not be too fast or too complicated, because the camera has limits as well.
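The enlarge and shrink gestures and the camera's speed limit can be sketched in a few lines of Python. This is only an illustration under assumptions: it supposes a tracker already reports each hand as an (x, y) position once per camera frame, and the names `zoom_factor`, `too_fast`, and `max_step` are hypothetical rather than taken from the Sato Laboratories system.

```python
import math
from typing import Tuple

Position = Tuple[float, float]  # assumed: one (x, y) hand position per frame


def zoom_factor(prev_hands: Tuple[Position, Position],
                cur_hands: Tuple[Position, Position]) -> float:
    """Scale to apply to the piece: how much the gap between the hands changed."""
    before = math.dist(*prev_hands)
    after = math.dist(*cur_hands)
    # If the hands started at the same spot, leave the size unchanged.
    return after / before if before > 0 else 1.0


def too_fast(prev_pos: Position, cur_pos: Position, max_step: float) -> bool:
    """True when a hand moved farther in one frame than the tracker can follow."""
    return math.dist(prev_pos, cur_pos) > max_step


if __name__ == "__main__":
    # Hands move apart from 1 unit to 2 units: the piece doubles in size.
    print(zoom_factor(((0, 0), (1, 0)), ((0, 0), (2, 0))))
    # A 5-unit jump in a single frame exceeds a 4-unit limit and would be ignored.
    print(too_fast((0, 0), (3, 4), max_step=4.0))
```

Using the ratio of hand distances rather than absolute positions means the same gesture works wherever the user stands in front of the screen.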

As an exhibition space for this piece, I would like a space similar to the grand room in UCSB's art museum, without the middle wall that blocks the full view of the wall from the entrance. The reason is that towards the end of the show the database of art pieces can display every user's work, much like a slideshow. I want a total of six interactive screens placed in different rooms. The main room would have screens that display previous works, to give people a sense of what can be done with a touch of their own creativity and to keep them occupied while others are using the technology in the rooms. Other screens placed throughout the main room will display either the work being done in the rooms or past work.

I realize that most of the work this technology allows is time consuming, especially with an audience of unknown size, which is why a time limit could be imposed to give everyone a chance to use it. The experience lies entirely in how and what the user decides to create. I want users to explore the device's full potential in order to see and experience that technology has advanced, and that even small gestures and actions can produce great things, as seen with touch technology, so long as everyone collaborates to create. One should think about this technology the way it is portrayed in futuristic movies that show holographic images one can 'touch', as in Iron Man, where Tony Stark does so in his home. Touch-based technology already comes close to letting people create things in simple forms; this work is more interactive, and that in a sense makes it unique. Our perception of space and even artwork is changing thanks to technological developments; this work is a step towards changing how, and with what, people will make art, how that changes its context, and what benefits come from not having to use physical equipment to make an art piece. If a painting, drawing, or sculpture is done within 3D space, would it still be considered as such? Or does it become something more?

http://www.hci.iis.u-tokyo.ac.jp/en/res ... splay.html

http://www.hci.iis.u-tokyo.ac.jp/assets ... -PPD08.pdf

http://blog.grove.com/a-step-closer-to- ... l-display/

http://www.redmondpie.com/when-will-we- ... eal-world/

http://www.mymodernmet.com/profiles/blo ... rks-hitech

Last edited by andysantoyo on Tue Dec 04, 2012 8:05 am, edited 5 times in total.

Re: Wk10 - Final Project presentation Here

Marie Sester is a French-American artist working primarily with digital technologies to create works that take on many forms but ultimately study the ideological structures through which culture, politics, and technology affect our spatial awareness in the world.